Research Summary

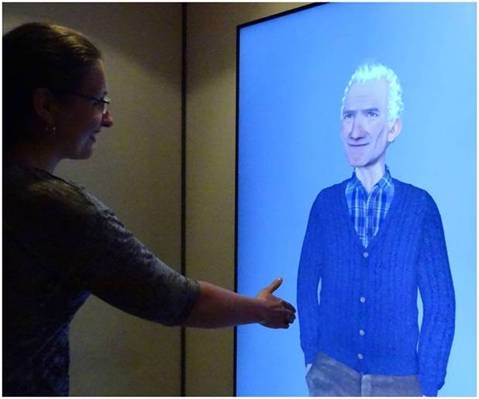

My research focuses on the analysis, modeling, and recognition of social and emotional signals in human-human and human-machine interactions (with computers, robots, virtual agents, etc.). In robotics and virtual agents, my work concerns perception: the system's ability to collect, process, and format the information the robot or agent needs to act and react in the world around it.

My work mainly draws on computer vision, multimodal fusion, and machine learning, applied to the analysis of human behavior and to the machine prediction of a person's emotional and/or mental state.

In summary, I work on the automatic prediction of human characteristics (e.g., personality), the detection of human behavior (posture, gaze, etc.), and the detection of a person's mental and emotional state (e.g., engagement detection, facial action detection, and continuous emotion prediction) in the context of human-virtual agent and human-robot interaction.

Interests

- Artificial Intelligence

- Robotics

- Data Science

- Affective Computing

- Social Signal Processing

- Human-Machine Interaction

- Computer Science

- Image and Signal Processing

- User-Centered Design

Research Projects

- http://www.rennes.supelec.fr/immemo/

Main Co-organized International Events

- 2018: "The General Data Protection Regulation: An opportunity for the CHI community?" at CHI 2018, Montreal, Canada

- 2015: INTERPERSONAL, "First International Workshop on Modeling INTERPERsonal SynchrONy" at the International Conference on Multimodal Interaction (ICMI), Seattle, USA

- 2015: "First International Workshop on Engagement in HumAN Computer IntEraction" at the 6th International Conference on Affective Computing and Intelligent Interaction (ACII), Xi'an, China